The path toward certification by simulation, Part 1: Verification & validation and uncertainty quantification

The movement to cut the time and cost of airframe certification is gaining momentum. What are V&V and UQ, and how might they support more reliable composites simulation?

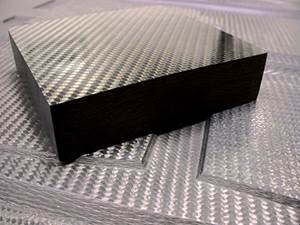

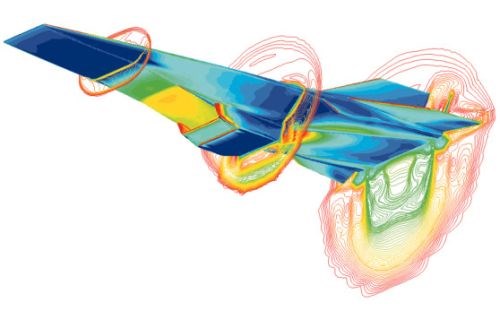

Computational Fluid Dynamics (CFD) simulation of Hyper-X research vehicle airframe moving at Mach 7 with engine operating. SOURCE: NASA Dryden Flight Research.

V&V and UQ are being pursued by NASA, General Motors and many other organizations for a wide range of simulation applications such as CFD, space vehicle reentry, nuclear reactions, seismology, and chemical reactions in Li-ion batteries.

In March HPC’s column, “Accelerating the certification process for aerospace composites,” Dr. Byron Pipes discussed the need to reduce the time and cost of certification by increasing the use of simulation and virtual testing. He suggested that one way to achieve this without sacrificing reliability is to certify the simulation tools themselves and to make those tools accessible not just to OEMs but throughout the risk-sharing supply chain. As Pipes explains, “This would result in enormous savings because the contemporary approach involves verification and validation testing by each user before the simulations can be trusted.” Dr. Pipes presented part of this vision in “Lightweighting through Composites Simulation” at the 2013 SPE Automotive Composites Conference & Exhibition (ACCE, Sept. 11-13 in Novi, Mich., USA).

What is Verification & Validation?

Verification and validation are both applied to simulation. As explained by Dr. Mark Anderson, Technical Advisor to the National Nuclear Security Administration (NNSA) within the U.S. Department of Energy (DOE, Washington, D.C.), “Simulations are not reality, but rather potentially useful representations of reality. Verification and validation assessment provides evidence for the scientific veracity [or exactness] of these representations.” I quote Dr. Anderson because Byron Pipes has suggested that the composites industry could follow the example set by NNSA, which has no choice but to certify using simulation because physical tests to determine nuclear weapons’ performance are banned.

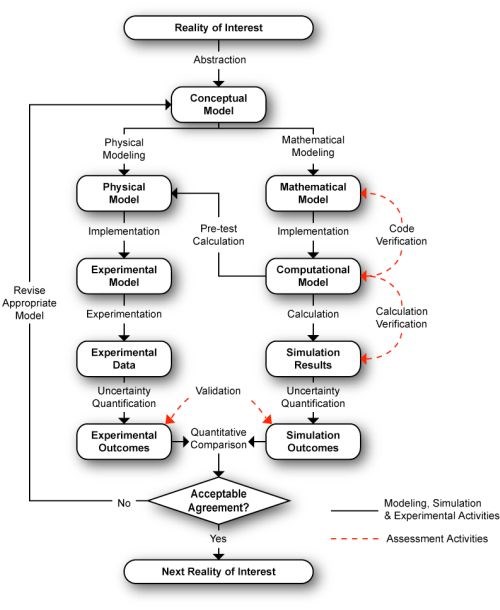

Dr. Barna Szabo is co-founder and president of Engineering Software Research and Development (ESRD, St. Louis, Mo., USA) and a retired professor of mechanics at Washington University (also in St. Louis). He has written several texts on finite element analysis (FEA), a common type of simulation used in composite structures. He defines verification as the control of approximation errors, which includes computer code verification, numerical solution verification and verification of input data. Validation he defines as quantitative assessment of the predictive accuracy of a mathematical model, typically by comparing simulation results with experimental data. Anderson’s diagram below illustrates the difference between the two. Both Anderson and Szabo agree that verification is a prerequisite to validation.

SOURCE: Mark Anderson, Los Alamos National Laboratory, Technical Advisor to the National Nuclear Security Administration

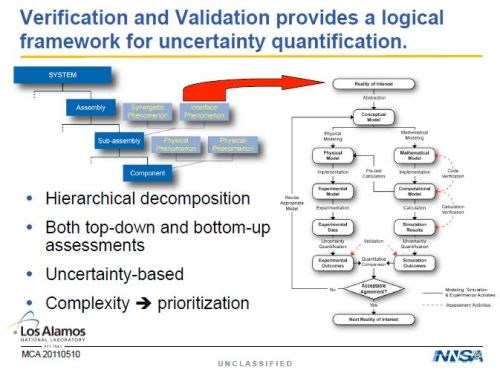

Uncertainty Quantification

According to Anderson, “uncertainty” is the common language in defining how “approximately equal” simulations are to scientific reality. The diagram above was taken from the slide below. Notice how the NNSA’s hierarchy of component, sub-assembly, assembly and final system is practically identical to the pyramid for certifying composite structures in an airframe. Pipes defines Uncertainty Quantification (UQ) as an established methodology to predict the range in expected simulation outcomes. He adds, “UQ can combine simulation and experiments to reflect the actual range in expected performance and thereby assure confidence in performance with fewer experiments.”

SOURCE: Mark Anderson, Los Alamos National Laboratory, Technical Advisor to the National Nuclear Security Administration

When I asked Anderson to define UQ, he responded, “If you do a simulation on a particular part with a given material, you get a single answer. In the real world, that part will not be exactly what you modeled. The fibers may be laid at a slightly different angle, the interstitial space may be slightly different, the resin-to-fiber interface may be slightly different, etc. In UQ, you model this variability, not point-to-point in a given part, but part-to-part. For example, you vary the modulus and run another simulation. Now you begin to quantify that variability.”

He continues, “Within simulation, you have several different types of uncertainty: parametric uncertainty (e.g., Young’s modulus, yield strength, boundary conditions, etc.) and model form uncertainty, in that the models are typically an approximation based on theory and experimentation. You also have measurement uncertainty, which is the deviation inherent in testing and collecting data. To gain an understanding of your parametric uncertainty, you can vary each parameter and run the simulation over and over and eventually define a ‘fan of uncertainty’ that has boundaries. Model form uncertainty is a more difficult problem. This is approached by doing a lot of small-scale experiments to validate and verify your model, and then refining the model. So there is an investment to be made, both in time and money.”
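To make the idea of varying a parameter and re-running the simulation concrete, here is a minimal Python sketch of parametric uncertainty propagation by random sampling. It is not Anderson's or NNSA's method. The "simulation" is a stand-in closed-form formula for the tip deflection of an end-loaded cantilever beam, and the load, length, section property, mean modulus and 5% part-to-part scatter are all hypothetical values chosen only for illustration.

```python
import random
import statistics


def tip_deflection(load_n, length_m, modulus_pa, inertia_m4):
    """Stand-in 'simulation': tip deflection of an end-loaded cantilever beam."""
    return load_n * length_m**3 / (3.0 * modulus_pa * inertia_m4)


def propagate_modulus_uncertainty(n_samples=5000, seed=0):
    """Sample the modulus part-to-part and collect the spread in the output."""
    rng = random.Random(seed)      # fixed seed so the run is repeatable

    # Hypothetical inputs, assumed only for this illustration.
    load_n = 1000.0                # applied tip load, N
    length_m = 1.0                 # beam length, m
    inertia_m4 = 8.0e-7            # second moment of area, m^4
    mean_modulus_pa = 70.0e9       # nominal Young's modulus, Pa
    scatter = 0.05                 # assumed 5% coefficient of variation

    nominal = tip_deflection(load_n, length_m, mean_modulus_pa, inertia_m4)

    # One 'simulation' per sampled part: vary the modulus, run again.
    results = []
    for _ in range(n_samples):
        modulus = rng.gauss(mean_modulus_pa, scatter * mean_modulus_pa)
        results.append(tip_deflection(load_n, length_m, modulus, inertia_m4))

    # Empirical 2.5th and 97.5th percentiles bound the 'fan of uncertainty'.
    results.sort()
    lower = results[int(0.025 * n_samples)]
    upper = results[int(0.975 * n_samples) - 1]

    return {
        "nominal": nominal,
        "mean": statistics.mean(results),
        "lower": lower,
        "upper": upper,
    }


if __name__ == "__main__":
    band = propagate_modulus_uncertainty()
    print(f"nominal deflection: {band['nominal'] * 1000:.3f} mm")
    print(f"95% band: {band['lower'] * 1000:.3f} to {band['upper'] * 1000:.3f} mm")
```

In a real program each sample would be a full finite element run rather than a one-line formula, and several parameters would be varied together. The sketch addresses only parametric uncertainty; model form and measurement uncertainty are not represented.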

Anderson says other industries are also using UQ. For example, General Motors has used it in crash test simulations, and NASA is in the process of incorporating V&V into its simulations. Like NNSA, NASA has some tests that it cannot perform, such as analysis of results in a space environment. It also has some large-structure tests that are similar to those in composite aircraft certification in terms of scale and expense.

Pipes believes that a UQ-based approach to certification is vital for composites to compete with advancing metals technologies, and that current testing-based certification, which costs millions of dollars per material, prevents new materials and designs from being adopted. He sees this new paradigm as enabling certification with less testing, making it much quicker and cheaper, while also allowing composition and processing to be adjusted without recertification. Pipes insists that the current empirical approach to manufacturing must transition to science-based simulation because manufacturing is such a large source (perhaps the largest source) of uncertainty in the performance of composite structures (e.g., Was the fiber actually laid at the specified angle? Did the infusion really achieve the specified porosity, fiber volume and homogeneous resin-to-fiber distribution?). Pipes asserts that the knowledge NNSA has amassed in the science of UQ could be used by the composites industry to develop this new certification approach. Indeed, just looking at the roadmap and program structure NNSA has used to achieve its simulation-based certification goals could be useful.

Watch for Part 2: UQ Lessons from NNSA.
