Accelerating materials insertion: Where do virtual allowables fit?

In the quest to reduce the time and cost for aerocomposite design allowables development, will conventional physical testing and virtual testing go head-to-head or work side-by-side?

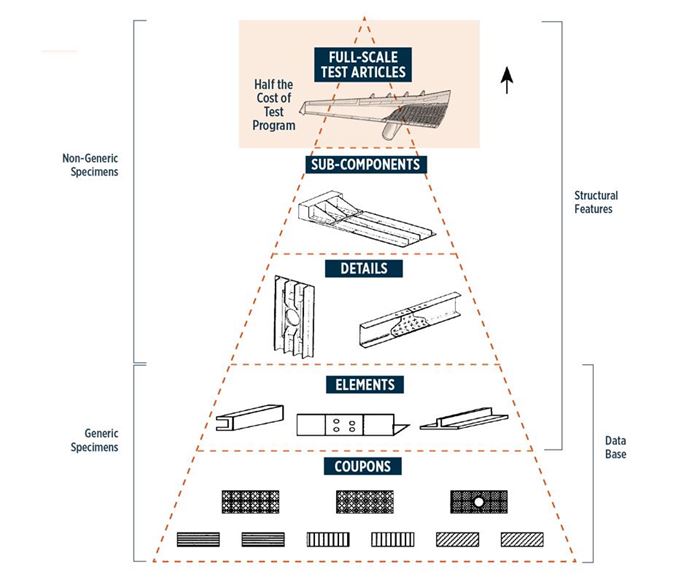

There is an important, ongoing debate in aerospace composites. Critics of the “building block approach” (BBA) used to generate design allowables claim that the required testing of thousands of physical coupons at the materials level (Fig. 1) wastes time and balloons costs to the point that new materials insertion in aerospace programs is overly burdensome.

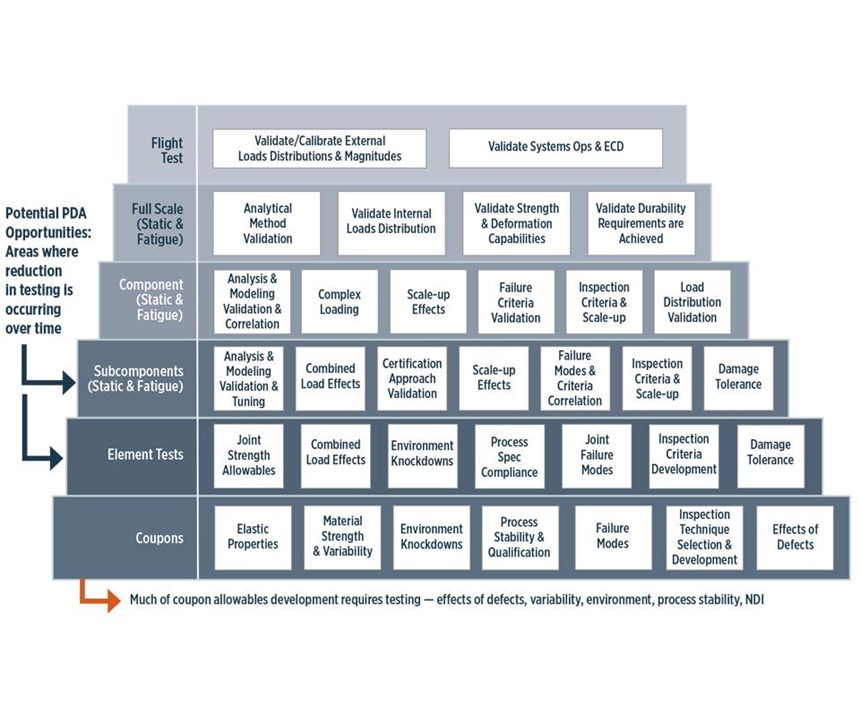

These same critics assert that today’s simulation software is sophisticated enough to virtually duplicate the conditions in physical coupon testing and, therefore, ought to be considered, in some cases, a sufficient substitute for physical testing. This could, at a minimum, abbreviate coupon testing. Defenders of the BBA contend that the majority of its cost is not in coupon testing but, instead, in component and full-scale tests, and that these levels are where the progressive damage analysis (PDA) that undergirds virtual allowables (VA) offers more benefits. Further, they argue that physical testing is, in any case, currently an aircraft certification process requirement.

At CAMX 2015, two presentations in the Accelerated Materials Insertion track took up these opposing views. Roger Assaker, president of software firm e-Xstream engineering (Newport Beach, CA, US), put forward the critics’ case in his presentation, “Virtual allowables approach to accelerate continuous fiber-reinforced polymers development and insertion.” Lockheed Martin Aeronautics’ (Fort Worth, TX, US) senior staff engineer and stress analyst Dr. Carl Rousseau defended conventional building block practice as the author of “Why virtual allowables are not cost-effective.”

It’s important to note at the outset that Assaker and Rousseau agree physical testing will never be completely eliminated, but they diverge when discussing how many physical tests are required and at what level in the BBA pyramid computer-aided virtual simulation could and should be used to reduce that number. Rousseau believes physical testing is the best method for quantifying variability in constituent fibers, resins and manufactured laminates. Assaker asserts variability is easily simulated by using the deviation in fiber and resin properties per manufacturers’ data, while laminate defects from processing can be included in the models and explored via PDA simulations. Each has respect for the other’s position, and though they see value in comparing the time and cost of the two approaches, each contends that his position in such comparisons is often misrepresented with incorrect supporting numerical data that needs to be clarified. There is no disagreement, however, about the priority of aircraft safety, and that current levels of protection must be maintained no matter what methods are adopted to facilitate insertion of new materials into an aircraft program.

CW invited these two experts to this forum, not to emphasize the divide, but instead to examine each side’s assertions, looking for common ground.

Counting the cost of coupons

Speaking first, in defense of BBA practices, Dr. Rousseau contends: “This whole issue of an excessive amount of test coupons required has been misrepresented,” adding that the norm is 1,500-3,000 coupons per material form, and this can be reduced by up to 75% by using extensive data pooling (see “Statistical data pooling for design allowables”).

Rousseau points out that coupon testing consumes a relatively small fraction of the overall cost (Fig. 1). “Half of the cost of certification testing is in the full-scale test articles,” he explains, noting that most programs complete two full-scale airframe tests (one static, one fatigue) and that these account for half the BBA program’s cost. “Details and subcomponents are two-thirds of the remainder, so coupon testing may total US$500,000 per material. To us, that’s just cheap insurance.” The reasoning is that, unlike metal, a composite’s properties vary based on laminate stacking sequence, lot/batch properties of fiber and resin, laminate manufacturing/molding process, environmental conditions, etc. Why add uncertainty by modeling when you can physically test to prove and bound actual variability?
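The fractions Rousseau quotes can be checked with a few lines of arithmetic. The sketch below is illustrative only. It assumes his US$500,000 coupon figure is the share left over once full-scale and detail/subcomponent testing are accounted for, so the implied program total is an inference from his numbers, not a figure he states.

```python
from fractions import Fraction

# Shares quoted by Rousseau; treating them as exact fractions is an assumption.
full_scale_share = Fraction(1, 2)                    # two full-scale airframe tests
remainder = 1 - full_scale_share
detail_share = Fraction(2, 3) * remainder            # details and subcomponents
coupon_share = 1 - full_scale_share - detail_share   # what is left for coupons

coupon_cost_usd = 500_000                            # Rousseau's per-material figure
# Inferred total, not stated in the article.
implied_total_usd = coupon_cost_usd / coupon_share

print(f"coupon share of program cost: {coupon_share}")
print(f"implied total program cost:   US${int(implied_total_usd):,}")
```

On these assumptions, coupons are one-sixth of the program cost, which is the basis for the “cheap insurance” argument.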

Believe it or not, BBA was developed (circa 1950s, adapted for composites by the 1980s) to reduce the cost of aircraft design substantiation. Instead of testing every part of every structure, the idea was to establish material basis values and use these to calculate preliminary design allowables. Then, based on the structural analysis, critical areas were identified for physical test verification. Thus, by testing greater numbers of cheap, small specimens, large-scale tests were minimized. Technology risks were assessed early in the program and analyses (traditional closed-form hand calculations and, over time, finite element and other computer methods) were used in place of tests where possible. “At all different stages and scales of development, both testing and simulation are used,” says Rousseau (Fig. 2).

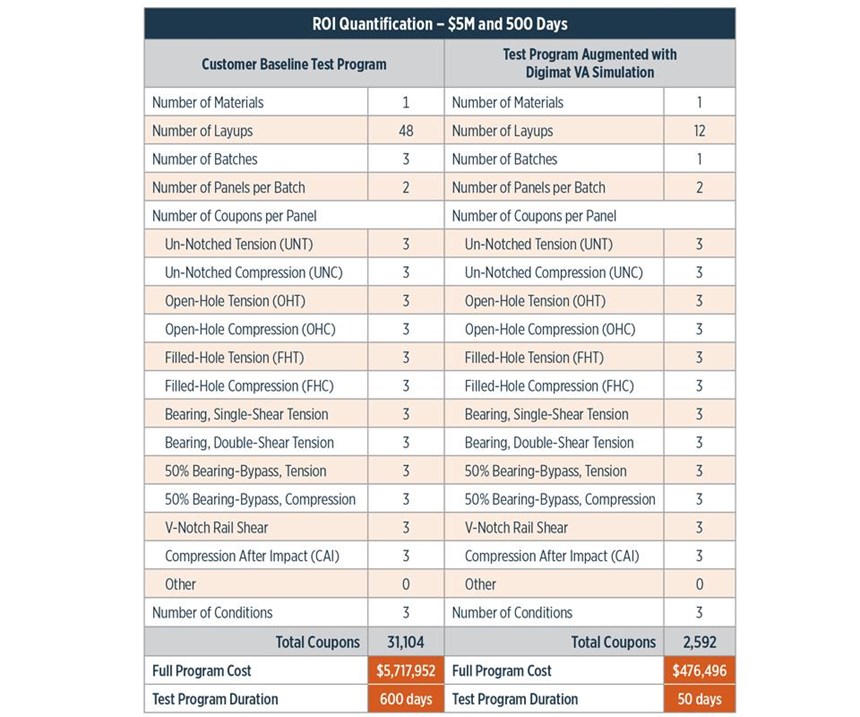

Assaker sees some common ground here, but points out that Rousseau’s conservative cost estimate for coupon testing is based on an unspoken assumption. “We agree that more of the cost is in the full-size articles testing,” he says. “However, we estimate US$5.7 million for all testing to generate allowables for a completely new material [Fig. 3]. Only for materials that are well known,” he emphasizes, “can you reduce the cost [as Rousseau asserts] to US$500,000. Our approach [for a new material] is to reduce the US$5.72 million to US$476,500 for statistical physical testing, and do the rest via simulation.”

“I agree that if you have to test 31,000, or even 10,000, coupons, then your test program cost will be in the US$5 million range or above,” Rousseau responds. But he appeals to the standards and expectations set by two sources: the National Center for Advanced Materials Performance (NCAMP, Wichita, KS, US), which works with the US Federal Aviation Administration (FAA, Washington, DC, US) and industry partners to qualify material systems, and CMH-17-1G, the latest revision of the Composite Materials Handbook. NCAMP’s standard test matrix, he points out, is a much lower 1,200 coupons, “and if you add up the various recommended test matrices in CMH-17-1G, the total count is roughly 5,000-7,000.”

At this point, Rousseau specifically compares BBA to VA, as presented by Assaker (Fig. 3). Noting the large number of different layups there, he says, “I don’t agree that 48 laminates would be required to test a new material.” Rousseau explains, “I use six laminates for tape and three for a fabric, plus three different thicknesses of only one laminate for compression-after-impact (CAI) testing.” Rousseau’s method reduces the number of laminates to 12, and the total coupon count from 31,104 to 7,776.
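The coupon totals quoted here follow from simple proportional scaling. As a minimal sketch, assume every laminate in Assaker’s matrix carries the same number of coupons (an assumption, although the figures divide evenly) and read Rousseau’s 12 laminates as six tape, three fabric and three CAI thicknesses:

```python
# Figures from the article.
assaker_laminates = 48
assaker_coupons = 31_104

# Assumption: coupons are spread evenly across laminates.
coupons_per_laminate, leftover = divmod(assaker_coupons, assaker_laminates)

# Our reading of Rousseau's count: tape + fabric + CAI thicknesses.
rousseau_laminates = 6 + 3 + 3
rousseau_coupons = rousseau_laminates * coupons_per_laminate

reduction = 1 - rousseau_coupons / assaker_coupons

print(f"coupons per laminate: {coupons_per_laminate} (remainder {leftover})")
print(f"Rousseau's matrix:    {rousseau_coupons:,} coupons")
print(f"reduction:            {reduction:.0%}")
```

Under that even-split assumption the per-laminate count is unchanged between the two matrices, so the whole saving comes from testing fewer layups.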

To Assaker’s dichotomy between new vs. well-known materials, Rousseau replies, “I consider ‘well-known’ to be any composite with continuous fiber, in tape or a 2D weave, in a polymeric matrix.” This covers the entire landscape of materials used in automated tape laying (ATL) and automated fiber placement (AFP), as well as out-of-autoclave (OOA) and autoclave-cured prepreg, and dry reinforcements impregnated and molded using resin infusion processes. Not included are 3D preforms used in resin transfer molding (RTM) and parts reinforced with discontinuous fibers, such as those made with molding compounds. Assaker reiterates: “I chose 48 laminates to explore the design space for a completely new material.”

Rousseau and Assaker concede that apples-to-apples comparisons here are difficult because actual cost and potential savings in a physical testing program vary depending on the part and materials. Although they agree that physical testing is required, Assaker asserts that simulation can reduce the need for physical testing and that what remains can be done more strategically. “Our view is that the virtual testing would drive the statistical data pooling that companies like Lockheed already use,” Assaker adds, “making the allowables process and associated testing more efficient.”

Cost vs. time

Assaker’s Fig. 3 is a rebuttal to the quantitative comparison in Rousseau’s CAMX 2015 presentation, which tried to compare BBA to VA in more concrete terms. Assaker concedes it is based on a number of arguable assumptions; for example, dollar values can easily vary because different organizations do stress analysis differently.

Assaker contends that Rousseau’s conclusions are premised on at least one incorrect assumption. “His cost conclusions are true if you assume that the VA test coupons are built in the FEA code by hand, from scratch, every time,” says Assaker. “But this is exactly what our solution was developed to streamline.” To give a quick background, Assaker is talking about the Digimat software, which he says comprises FEA technology, composites expertise and failure models.

Rousseau says he assumed the FE model would be built once per test configuration (compression, tensile, CAI, etc.), “but each time the model is run, you have to change the MAT and PSHELL cards for material properties, lay-up and/or environmental condition.”

Assaker responds, “We don’t build the model once per test configuration for each material because it is already done; we built each test configuration’s model into the software. These are standardized tests. By definition, they cannot change. Modifying the MAT and PSHELL cards is also automated.” He explains the issue is not complexity — the coupons are very simple, with elementary loading conditions — “but you have to do a lot of them and there is complexity in the damage analysis underneath.” Assaker says e-Xstream worked with allowables engineers to program the mechanics into the software so that the user simply enters the unique parameters per case.

Despite all the talk of cost thus far, Assaker contends that the impetus for development of his company’s virtual testing capability was not cost, but time. “Engineers came to us frustrated at the long time periods required for testing before they could begin the design process,” he explains. “Our objective was to allow an engineer to quickly compare material 1, 2 and 3, for example. We wanted to shorten coupon testing to six months or less.”

But Rousseau responds, “In my experience, no one waits on testing to begin the design process. I can readily estimate properties required to begin design. Since all of the other design development tasks take longer than coupon testing, I can always have the final allowables done prior to drawing release.”

Assaker counters that he is suggesting use of VA instead of estimated properties.

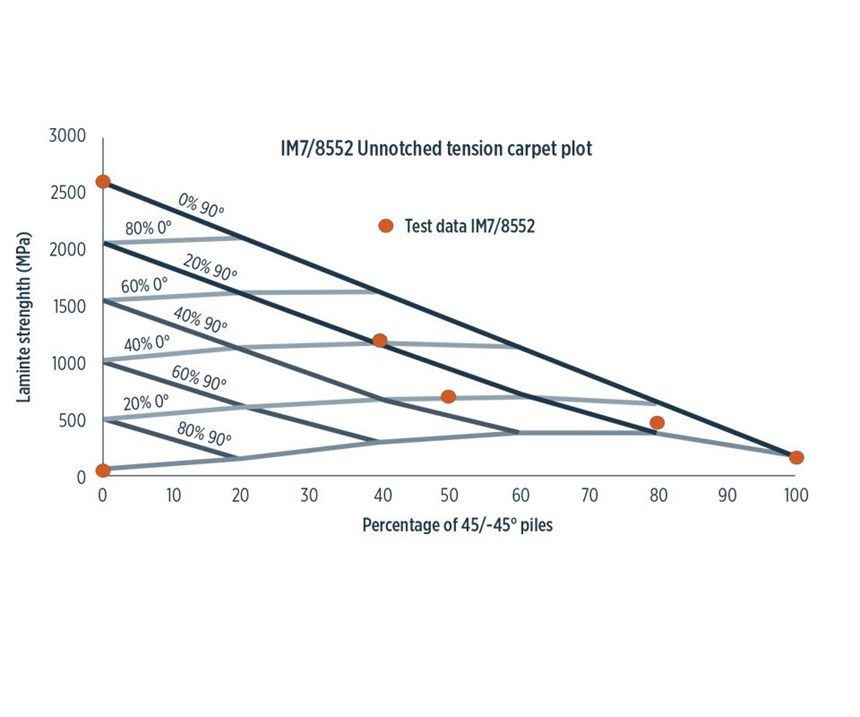

It is important to note here that definitions matter. Because of the ways BBA and VA are inherently set up, this is a somewhat apples-to-oranges comparison. In VA, physical testing supplies the input data and thus is mainly performed on lamina (ply level), not laminates (layups), at 0°, 90° and 45° (shearing tests). A small number of physical tests on laminates are performed for verification (Fig. 4). Using the lamina data, the software automatically builds laminates, sets up test coupons and conditions and then runs the test matrices (e.g., compression, tension, open-hole compression [OHC], notched, CAI, filled hole) for various environments (room temperature ambient, hot/wet, etc.). Assaker adds, “We calculate all of these laminate properties by simulation and test only at the red dots (Fig. 4) to validate our results.” The software then solves for allowables. Assaker contends this requires not the two months projected in Rousseau’s CAMX paper, but a matter of weeks after lamina test data are received. And because lamina data are simplified, this testing reportedly can be achieved more quickly than Rousseau’s projected 18 months.
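To give a feel for how quickly such a virtual test matrix grows, the sketch below enumerates every combination of laminate, test type and environment. It is a generic illustration, not a representation of how Digimat works internally. The test types and environments come from the description above, while the three layup labels are hypothetical placeholders.

```python
from itertools import product

# Hypothetical layup labels, for illustration only.
laminates = ["layup A", "layup B", "layup C"]
# Test types and environments named in the article.
test_types = ["compression", "tension", "OHC", "notched", "CAI", "filled hole"]
environments = ["room temperature ambient", "hot/wet"]

# Every (laminate, test, environment) combination is one virtual coupon case.
virtual_matrix = list(product(laminates, test_types, environments))

print(f"{len(virtual_matrix)} virtual cases")
for case in virtual_matrix[:3]:
    print(case)
```

Each added layup multiplies the case count by the full set of tests and environments, which is why the number of layups explored drives the size of either a physical or a virtual program.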

Rousseau clarifies that his 18-month testing period includes the time for panel fabrication, machining, wet-conditioning and static testing at cold-temperature ambient, room-temperature ambient and elevated-temperature wet conditions, plus report-writing. “Wet conditioning alone takes two months, regardless of how many or few specimens you test,” he points out. “In my opinion, all of this physical laminate testing will take a minimum of 9 months, with 18 being a conservative estimate.”

Assaker, however, explains that VA uses a formula to calculate wet laminate properties from the properties for dry laminates already developed from lamina testing. This formula is based on the underlying physics and damage progression of the environmental conditioning process. Rousseau counters that there are better fits for this simulation technology than in generating allowables. “This specific type of simulation enables more modeling and less testing of structural failure events, including failure with various sorts of assumed damage or manufacturing flaws. These are required for certification but are expensive to replicate experimentally.” He notes that PDA-based simulations are also useful for modeling complex failure mode interactions at the assembly level (elements, components, full-scale test articles, etc.). “This makes more sense than trying to replace coupon-level design allowables.”

But can virtual testing be used to help speed up the insertion of new materials? “Absolutely,” says Rousseau. “Once you get into non-laminated processes like 3D preforms, resin transfer molding (RTM) and injection molding with discontinuous fibers, all of the methodology we use for design allowables on fiber-placed airframe parts — which are fairly flat and behave basically like shells or plates — breaks down.”

Assaker agrees and says using VA at the more complex levels is already on e-Xstream’s roadmap, “but we need to start with the coupon level because we have to show accuracy and earn industry confidence before testing by simulation can be accepted at these higher levels.”

Assaker also agrees with VA’s potential role with RTM and short-fiber composites. “We don’t have to be relegated to non-traditional laminates [NTL], but we handle them quite well.” He notes that e-Xstream has been working with the auto industry on chopped fiber parts for 13 years, and can analyze them with as much accuracy as continuous fibers. “We simulate both the part and the process in order to address issues like fiber orientation and porosity.”

However, Assaker points out that for automotive, “we move to direct engineering of parts, which is a very different approach from the current step-by-step BBA process in aerospace. In order to make changes where BBA methodologies break down, I believe it is necessary to first show how they break down and possible solutions.” For example, in a directly engineered RTM part, observed defects, such as porosity, are introduced in the simulated laminates. “We can then study the knockdown factors in resin-rich and resin-poor areas,” he explains. “For short-fiber parts, we follow this same process, because you basically have a different laminate at each point in the material due to changes in fiber orientation.” He acknowledges that there is no CMH-17 equivalent for testing short-fiber materials.

But Assaker sees value in virtual testing’s capability to expand the design space (e.g., by examining hybrid layups and mixtures of product forms, such as tape inner laminate with fabric outer plies). He says physical testing for this is impractical because the number of layup combinations grows significantly. “Virtual testing can provide high-quality information early, guiding design and physical testing to the most promising layups,” Assaker says, adding that the current allowables methodology, which is basically ‘black metal’ design, might be sufficient for conventional layups (0°/±45°/90°), but increasing the performance of composite structures might require novel designs, such as asymmetric laminates with low angles (20°, 30°, etc.) and different manufacturing techniques, such as steered fibers. “Determining the performance of NTLs will become increasingly important.”

A matter of semantics?

Rousseau says the consensus among his peers at the American Society for Composites 31st Technical Conference (Sept. 19-22, 2016, Williamsburg, VA, US) was “there is no use for VA in certification.” He concedes, “we do believe it is very useful in the pre-design and design development process. For allowables, you simply cannot simulate the statistical data required.” Assaker believes that VA can capture more variability than BBA: “BBA uses three batches of material. We do the same by randomly picking different resin and fiber properties within the range of values given in the material specification and/or lamina physical test data. Therefore, we can test three batches or 100 simply by changing one variable in the software from 3 to 100. The range in data was already input at the beginning. The only change is computer crunching time.” In the future, he sees the possibility to input all of the data from an OEM’s receiving procedures (e.g., testing and/or review to verify that incoming materials comply with OEM material specifications) into the model. This would integrate the power of Big Data, which is already used by manufacturers to reduce variability, off-spec parts and waste.
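The batch-sampling idea Assaker describes can be sketched in a few lines. This is a minimal illustration, not e-Xstream’s method: the property ranges and fiber volume fraction are hypothetical, uniform sampling is an assumption, and a simple rule-of-mixtures stiffness estimate stands in for the progressive damage model, which the article does not detail.

```python
import random
import statistics

# Hypothetical specification ranges (GPa); not from the article or any datasheet.
FIBER_MODULUS_RANGE = (225.0, 235.0)
RESIN_MODULUS_RANGE = (3.2, 3.8)
FIBER_VOLUME_FRACTION = 0.60  # hypothetical

def simulate_batches(n_batches, seed=0):
    """Return one longitudinal ply modulus (GPa) per virtual batch."""
    rng = random.Random(seed)
    moduli = []
    for _ in range(n_batches):
        # Assumption: properties are drawn uniformly within the spec range.
        e_fiber = rng.uniform(*FIBER_MODULUS_RANGE)
        e_resin = rng.uniform(*RESIN_MODULUS_RANGE)
        # Rule of mixtures, used here only as a stand-in for the real model.
        e_ply = (FIBER_VOLUME_FRACTION * e_fiber
                 + (1 - FIBER_VOLUME_FRACTION) * e_resin)
        moduli.append(e_ply)
    return moduli

# Going from 3 batches to 100 is a one-parameter change.
for n in (3, 100):
    sample = simulate_batches(n)
    print(n, round(statistics.mean(sample), 1), round(statistics.stdev(sample), 2))
```

Note that the sketch can only reproduce scatter that falls inside the input ranges. Whether those ranges capture real batch-to-batch variability is exactly the point Rousseau disputes.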

Assaker contends, “The argument between virtual and physical testing in the development of allowables has been presented as a false choice between one or the other. VA is interpreted as using simulation results directly in the production of design allowables for certification. We see certification as separate. What we’re proposing is simulation to help reduce the number of allowables tests they need to do.” Rousseau agrees, “The best way to use the simulation/VA tools is as a more efficient way to design a test program. I see these as complementary to the traditional allowables methods, not competitive.” Assaker says experts like Rousseau can play a key role in helping to refine VA and move it forward to make the allowables process more informative and efficient.

Related Content

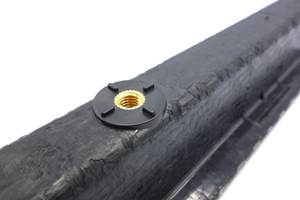

Price, performance, protection: EV battery enclosures, Part 1

Composite technologies are growing in use as suppliers continue efforts to meet more demanding requirements for EV battery enclosures.

Read MoreRobotized system makes overmolding mobile, flexible

Anybrid’s ROBIN demonstrates inline/offline functionalization of profiles, 3D-printed panels and bio-based materials for more efficient, sustainable composite parts.

Read More9T Labs, Purdue University to advance composites use in structural aerospace applications

Partnership defines new standard of accessibility to produce 3D-printed structural composite parts as easily as metal alternatives via Additive Fusion Technology, workflow tools.

Read MoreTU Munich develops cuboidal conformable tanks using carbon fiber composites for increased hydrogen storage

Flat tank enabling standard platform for BEV and FCEV uses thermoplastic and thermoset composites, overwrapped skeleton design in pursuit of 25% more H2 storage.

Read MoreRead Next

CW’s 2024 Top Shops survey offers new approach to benchmarking

Respondents that complete the survey by April 30, 2024, have the chance to be recognized as an honoree.

Read MoreFrom the CW Archives: The tale of the thermoplastic cryotank

In 2006, guest columnist Bob Hartunian related the story of his efforts two decades prior, while at McDonnell Douglas, to develop a thermoplastic composite crytank for hydrogen storage. He learned a lot of lessons.

Read More

.jpg;maxWidth=300;quality=90)